May 2025

As the days lengthen and the summer beckons, here is my latest edu-blog filled with morsels of SEND practice, DfE reviews and research guidance.

Intrigued? Then read on…

Dyslexia

Assistant head and SENCo, Emma Saunders, has produced a super graphic that defines dyslexia. She breaks down key parts of the definition of dyslexia, highlights what these challenges might look like in the classroom, and offers brief, practical ideas for how to support them.

The updated definition of dyslexia now includes references to phonological memory/auditory memory and working memory, as these areas are often overlooked but can have a significant impact across the entire curriculum. It’s not just about reading and writing.

Qualitative or Quantitative research?

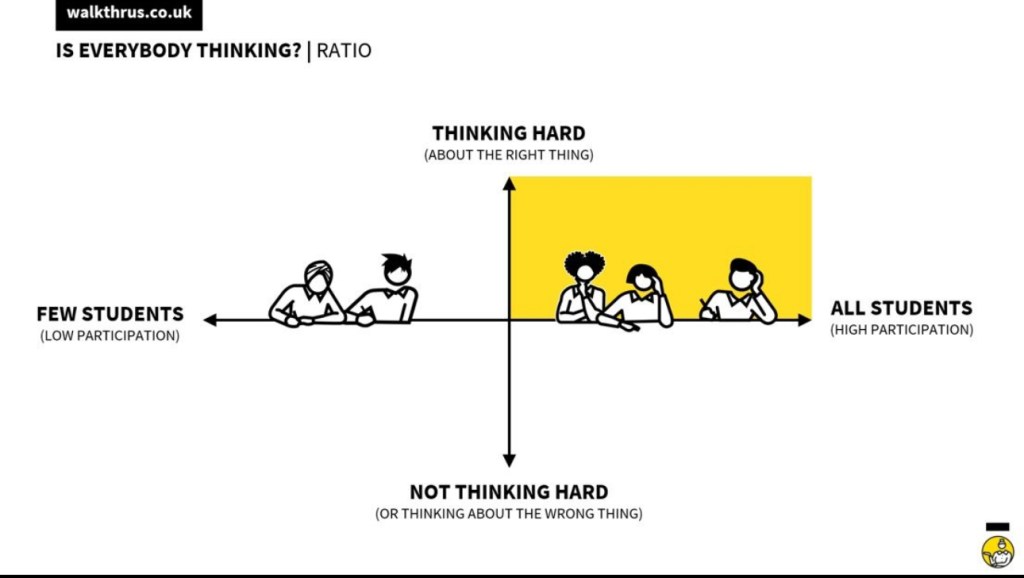

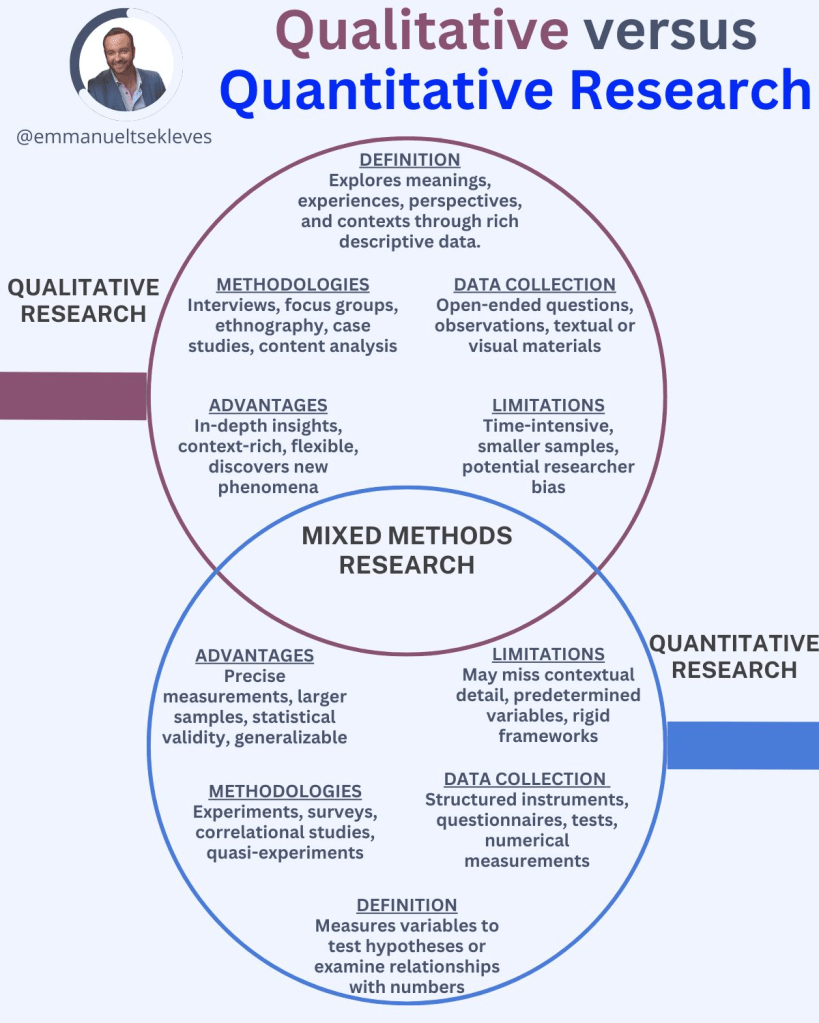

In an article on research, Emmanuel Tsekleves explores the value of, and interconnection between, qualitative and quantitative research.

He suggests (rightly in my opinion) that both have their place, and mastering them can set you apart. In his post, he states that:

Qualitative Research:

→ Think of it as the soul of your study. It dives deep into meanings and experiences. Uncovers the why and how of human behavior.

Quantitative Research:

→ This is the skeleton. It provides structure through measurable data. Offers the what and how much.

But what if you could blend them?

The real breakthrough comes from Mixed Methods Research.

→ It’s like weaving the soul and skeleton together. Integrating qualitative and quantitative for comprehensive insights.

The challenge?

In academia, we often choose sides. We’re conditioned to believe one is superior. But what if that’s a limitation rather than a strength?

Here’s a thought: Could the future of research be a harmonious blend? Let’s rethink our approach. Imagine the possibilities when we embrace both.

I explore the definition and benefits of both styles of research methodology in my book, Irresistible Learning – embedding a culture of research in schools. For me, there is certainly a place for a blended approach to their use in school-based research.

Here is a link to step 5 of my research cycle if you want to find out more about qualitative and quantitative research.

Behaviour and emotional regulation

In a post on LinkedIn, The Secret Behaviourist shares a valuable insight into the effective implementation of emotional regulation tools in school.

It’s all about the process. When introducing a new tool for emotional regulation in school… ask this first:

“Do our staff truly understand what regulation is?”

A new framework gets rolled out — Zones of Regulation, Engine’s Running, Stress Response Curve, Window of Tolerance… Posters go up. A CPD slide deck is shared. And then we expect pupils to use it without thinking about how this fits in the school’s culture, systems and the theoretical underpinning for staff, students and parents.

Effective regulation isn’t a poster or a worksheet. It’s a relational, developmental, co-constructed skill.

If the adults don’t fully understand the theoretical underpinning of the system and how this is applied in the context of their own organisation, the pupils won’t benefit from it.

Here’s what The Secret Behaviourist says matters most:

1. 🧠 Start with the adults

If your staff can’t talk about their own regulation, they’ll struggle to support a child’s.

It’s not about oversharing — it’s about modelling calm, language, and safety.

2. 🤝 Co-regulation comes before self-regulation

Pupils need us to help them calm down.

The poster doesn’t do the work — the relationship does.

3. 🧰 Pick the right model for your setting

Some pupils connect with colours (Zones).

Others with metaphors (“engine running too fast”).

The model matters less than consistency and shared understanding.

4. 🚫 Don’t use regulation tools as behaviour management in disguise

Saying “go get back to green” might shut down the behaviour,

…but it doesn’t support the emotion.

These tools are about awareness, not compliance.

5. ⏳ Make time for it

Regulation isn’t taught in a one-off assembly.

It takes repetition, safety, and trust.

It’s not a toolkit — it’s culture.

We want children who can name, notice, and navigate their emotions.

So, finding and introducing the right emotional regulation tool starts with a key question: what is right for our students, our staff and our community?

1. Start with your staff and ensure that they know the theoretical basis of the system and how to apply this consistently across the school.

2. Build the culture so that the implementation is known and applied consistently by every member of the organisation.

3. Monitor to ensure that the system creates a climate where staff and students have the space to manage and strengthen their emotional state.

DfE releases areas of research

The DfE has published a paper outlining their drive to research key areas over the coming months and years. This links to the government’s five national missions:

• Kickstart economic growth

• Build an NHS fit for the future

• Safer Streets

• Break down the barriers to opportunity

• Make Britain a clean energy superpower

The key driver for the research is the aim to break the link between a child’s background and future success. They call this the Opportunity Mission. The DfE states that the opportunity mission will be delivered across four key areas or ‘mission pillars’:

1. Setting every child up for the best start in life. This means delivering accessible, integrated maternity, baby, and family support services through the first 1,001 days of life; and high-quality early education and childcare to set every child up for success.

2. Helping every child to achieve and thrive at school, through excellent teaching and high standards.

3. Building skills for opportunity and growth so that every young person can follow the pathway that is right for them.

4. Underpinning the other pillars is family security – ensuring every child has a safe loving home and tackling the barriers that mean too many families struggle to afford the essentials.

The research in the four pillars will focus on key areas of practice in schools:

SEND: definition, early identification and thresholds for support (see the ‘Best start in life’ and ‘Every child achieving and thriving’ sections).

Strategic Data and Analytics Capability (this links to, but is wider than, questions in the ‘Technology’ section below).

Supporting parents/carers to improve their home environment and ability to provide a home learning environment (see ‘Best start in life’ sections).

Understanding the role of technology for child development and wellbeing (see the ‘Technology’ section).

Supporting clear career paths, strong skills offers and economic growth (see the ‘Skills for opportunity and growth’ sections).

The document highlights the intent to focus on ways that education can increase opportunities to address disadvantage. So, with Ofsted’s new framework consultation about to close, I am certain we will see a sharpening focus on disadvantage, attendance, SEMH needs, safeguarding and SEND, as outlined in the new toolkits in the consultation. A wider question is whether the DfE is now positioning itself to revise the National Curriculum in line with the research findings.

The full document is available here.

Supreme Court’s ruling on ‘sex’

Schools Week have reported on the recent Supreme Court ruling on the legal interpretation of “sex” in the Equality Act 2010. It is clear that this will have implications across public life, including in relation to schools and other education institutions.

The article is an easy read and urges schools to consider the potential implications of the ruling for staff and students. However, it cautions against responding too swiftly, as this risks making decisions that have unintended consequences.

The full Schools Week article is here.

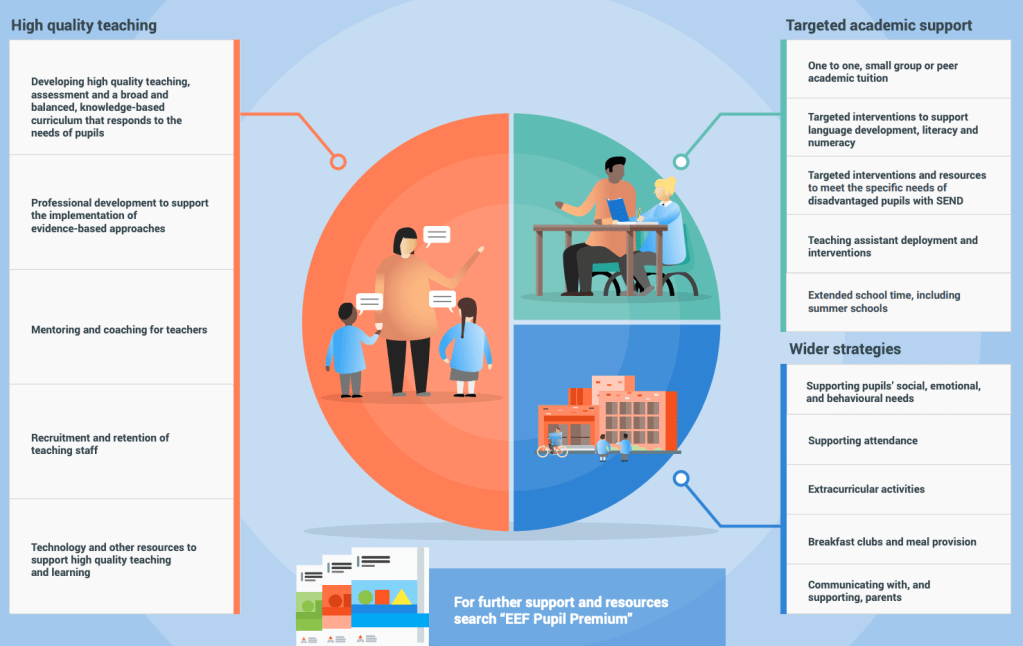

Pupil Premium and Recovery premium evaluation

The DfE are very busy and have published a document regarding the evaluation of pupil premium.

The report reflects on schools that have had discretion over how to use the funding within a broad ‘menu of approaches’. Overall, the findings were positive. Schools felt they had a good understanding of the funding and that premia-funded support was central to their wider offer for disadvantaged pupils. Schools reported that funding had an important impact on pupils’ outcomes.

Key findings in the report:

1. Premia-funded support was viewed as a key element of schools’ offer for disadvantaged pupils

2. Schools reported that premia funding had an important impact on pupils’ outcomes

3. Schools reported that improvements to wellbeing and attendance underpinned improvements in attainment

4. Approaches to planning and using the premia were driven by high quality data and evidence

5. Collaborative decision-making was key to planning involving leaders at every level in the school including governance

6. Schools drew on their deep knowledge of their pupils to plan effective support

7. Schools used a wide range of data sources to monitor support delivery

8. Schools adapted to pupil needs throughout the year to deliver effective support

9. Well trained staff, strong links with providers and parental engagement were key to effective implementation of support

School leaders felt that funding had a particular impact on overall wellbeing, attendance and academic outcomes. They felt that funding, especially recovery premium, had a positive impact on academic outcomes. This was particularly true for those who worked in schools with high numbers of disadvantaged pupils.

The report states that schools drew on their deep knowledge of their pupils to plan effective support and inform strategies. They identified several other factors which supported them to successfully implement support. These included the importance of having well-trained staff to deliver support to pupils, tailoring support to individual pupils, and clear communication between schools and external organisations when delivering support and aligning on desired outcomes for pupils. Finally, some school leaders emphasised the positive impact that engaging with families can have on the implementation of support.

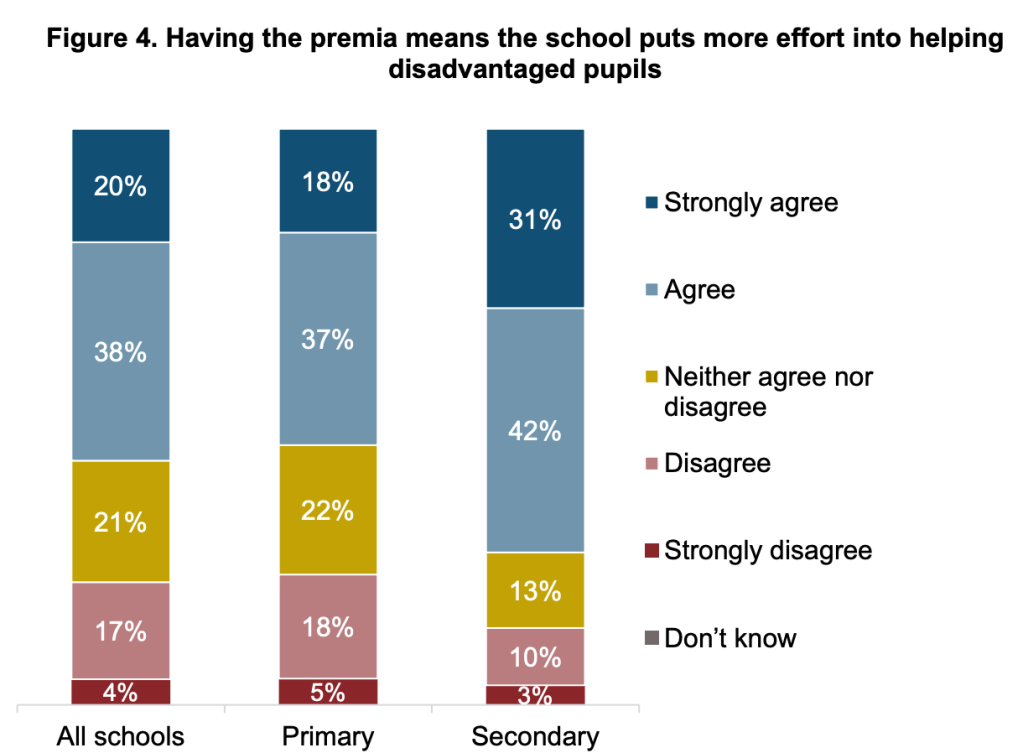

Overall, schools had positive views of the impact of the funding. Just over half of schools (57%) agreed that ‘having the funding means the school puts more effort into helping disadvantaged pupils’. One in five (21%) disagreed (see Figure 4). A larger proportion of secondary schools than primary schools agreed that having the funding means they ‘put more effort into helping disadvantaged pupils’ (74% vs. 55%). Agreement also varied according to trust status. Schools that were part of MATs were more likely to agree than maintained schools (60% vs. 55%).

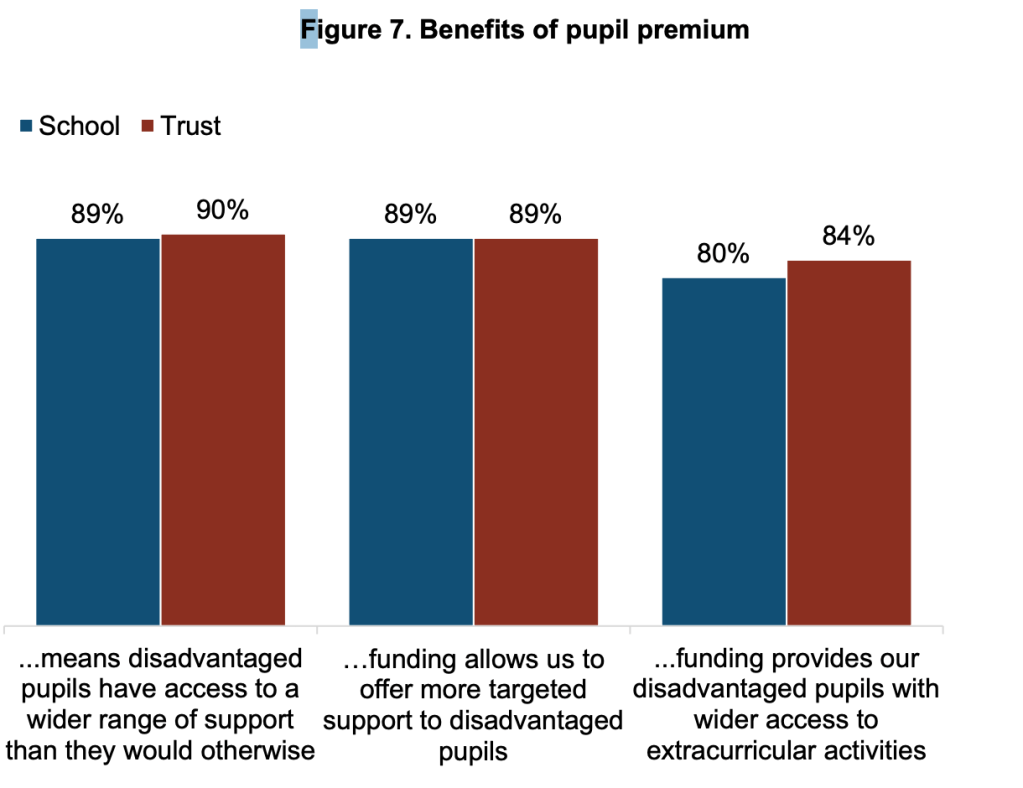

The majority of both schools and trusts agreed that the funding allows them ‘to offer more targeted support to disadvantaged pupils’ (both 89%). Eight in ten schools (80%) and 84% of trusts agreed that the funding provides disadvantaged pupils with wider access to extracurricular activities.

So, the evaluation reports positively on the impact of pupil premium and recovery premium funding, and the DfE is focusing its future research on disadvantage. Couple this with the tighter focus on disadvantaged pupils in the new Ofsted toolkits, and it looks like a tenacious focus on improving life chances for disadvantaged pupils remains very much in scope for us in schools.

The full document can be found here.

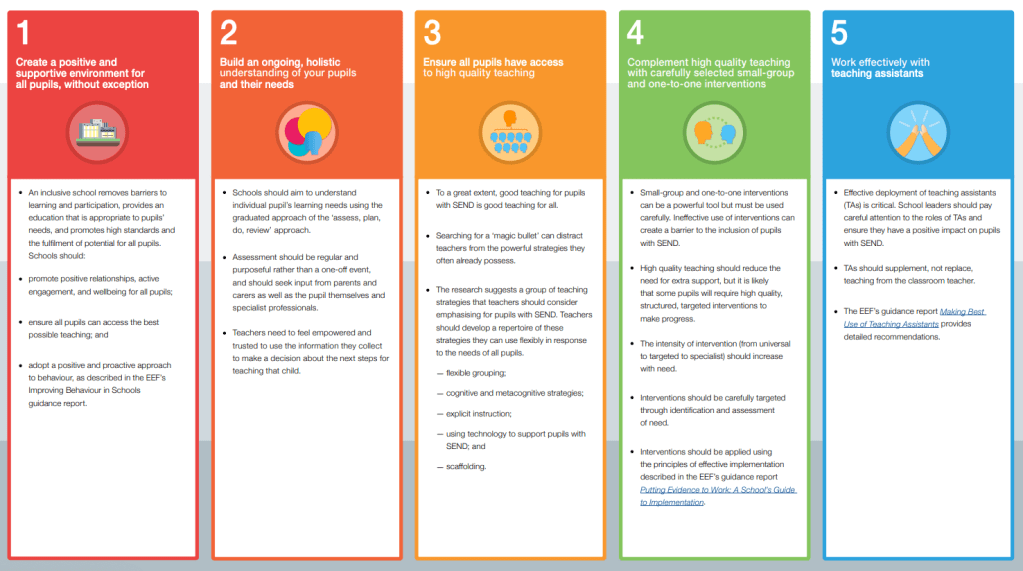

Five Principles for Inclusion

The Confederation of School Trusts have produced a paper entitled Five principles for inclusion. The paper sets out a fresh outlook on SEND and makes an interesting read. The five principles are:

Dignity, not deficit: Difference and disability are normal aspects of humanity – the education of children with SEND should be characterised by dignity and high expectation, not deficit and medicalisation.

Greater complexity merits greater expertise: All children deserve a high-quality education – where extra support is needed, it should be expert in nature.

Different, but not apart: Encountering difference builds an inclusive society – children with different learning needs should be able to grow up together.

Success in all its forms: Success takes many forms – we should value and celebrate a wide range of achievements, including different ways of participating in society.

Action at all levels: Change happens from the bottom-up as well as top-down – everyone has the agency and a responsibility to act.

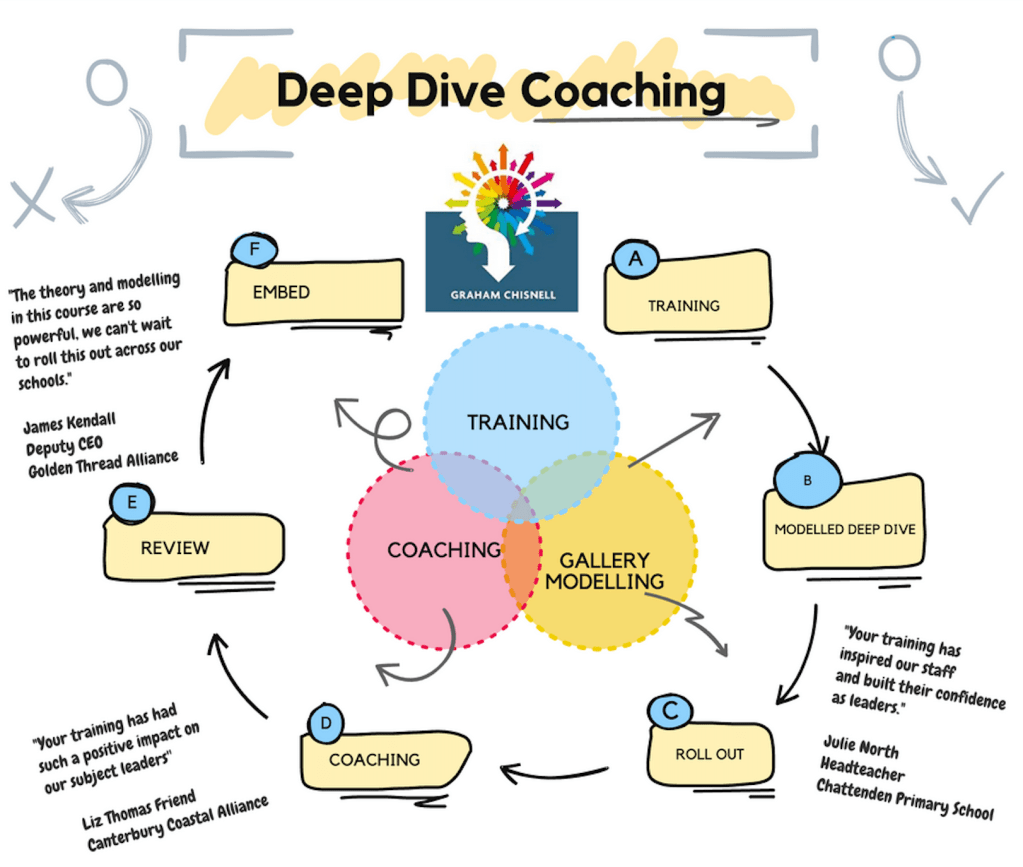

Get in touch

If you would like any bespoke support with coaching, leadership training, safeguarding reviews or research practice, please get in touch for a chat. Here is a synopsis of my consultancy offer and contact details.